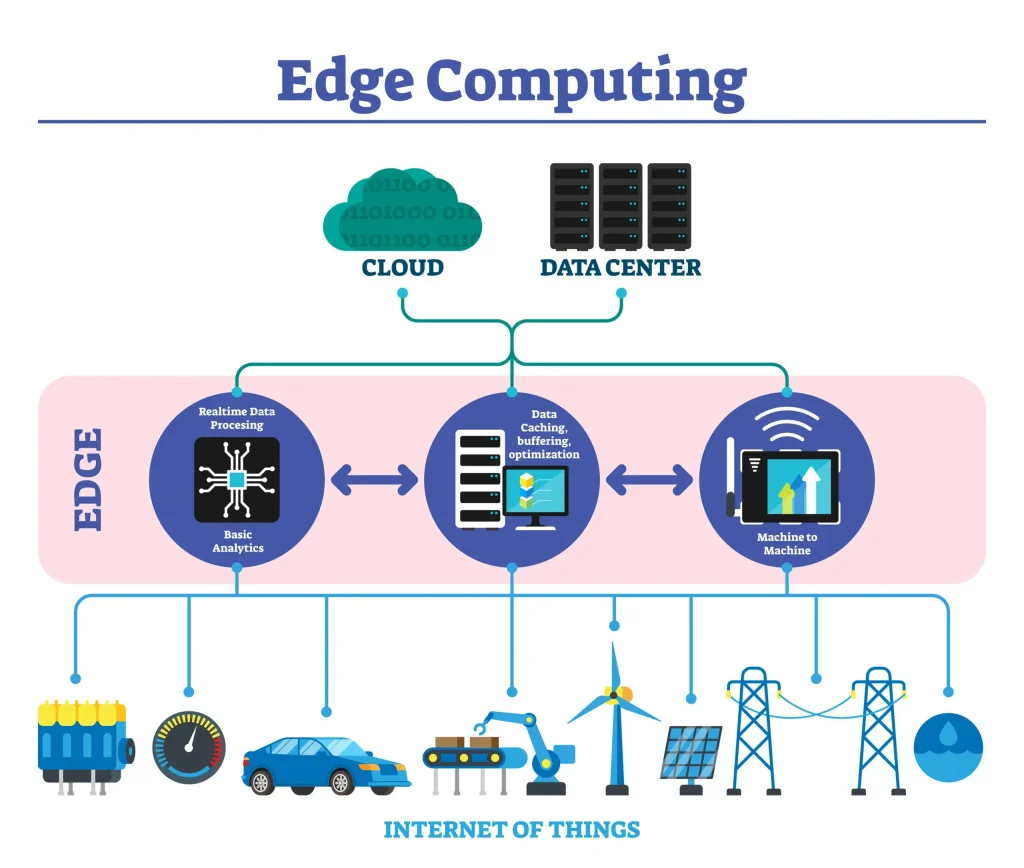

Edge computing sits at the heart of modern digital infrastructure, moving computation closer to devices and sensors to cut latency and enable real-time action. A well-designed edge computing architecture pairs edge AI with edge data processing, pushing insights toward the source while preserving context, reducing dependency on central servers, and improving response times across disparate environments. The benefits include lower bandwidth requirements, faster decision cycles, and enhanced privacy for sensitive data. Operations also stay resilient when connectivity to the cloud is intermittent, a scenario common on manufacturing floors, at remote sites, and in mobile deployments. Rather than treating cloud and edge as mutually exclusive, many organizations pursue a hybrid model: fast local processing for immediacy, and the scalability of central resources for analytics and archival workloads, with the split guided by latency, bandwidth, and governance considerations. As industries adopt these practices, the edge-centric paradigm is becoming a foundational element of digital transformation, driving smarter devices, safer operations, and more responsive customer experiences.

Several related terms point to the same trend. You'll hear references to distributed computing near the data source, edge-enabled analytics, and on-device inference as variants of the same idea. Other phrases, such as fog computing or localized data processing, emphasize proximity to sensors and reduced central network traffic. Together, these terms describe a design pattern that prioritizes latency reduction, privacy, and resilience, while allowing hybrid architectures that blend local processing with cloud-scale analytics. In practice, organizations map these concepts to concrete deployments, from micro data centers to edge gateways, creating a cohesive edge-first strategy without being limited by a single label.

Edge Computing Architecture: Enabling Real-Time Intelligence at the Edge

A robust edge computing architecture brings compute, storage, and analytics closer to data sources by organizing layered components such as edge devices, gateways, and micro data centers. This structure reduces data travel time, enabling near-instant responses for time-sensitive applications and creating the foundation for edge AI to run inference locally. Through edge data processing, raw streams can be filtered and summarized at the source, preserving bandwidth for only the most valuable insights.
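To make "filtered and summarized at the source" concrete, here is a minimal sketch of windowed summarization on an edge device. It assumes a stream of numeric sensor readings; the names `WindowSummary` and `summarize_windows`, the window size, and the alert threshold are all illustrative choices, not part of any particular edge platform.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Iterable, Iterator, List


@dataclass
class WindowSummary:
    """Compact record sent upstream instead of the raw readings (hypothetical shape)."""
    count: int
    minimum: float
    maximum: float
    average: float
    alerts: List[float]  # raw values above the threshold, kept for context


def _summarize(window: List[float], alert_threshold: float) -> WindowSummary:
    return WindowSummary(
        count=len(window),
        minimum=min(window),
        maximum=max(window),
        average=mean(window),
        alerts=[v for v in window if v > alert_threshold],
    )


def summarize_windows(
    readings: Iterable[float], window_size: int, alert_threshold: float
) -> Iterator[WindowSummary]:
    """Group readings into fixed-size windows and yield one summary per window."""
    window: List[float] = []
    for value in readings:
        window.append(value)
        if len(window) == window_size:
            yield _summarize(window, alert_threshold)
            window = []
    if window:  # summarize a trailing partial window as well
        yield _summarize(window, alert_threshold)


if __name__ == "__main__":
    raw = [20.0, 21.0, 22.0, 95.0, 20.0]
    for summary in summarize_windows(raw, window_size=4, alert_threshold=90.0):
        print(summary)
```

With a sketch like this, only one small summary per window would travel upstream, while out-of-range values are still forwarded individually so that the cloud side keeps the context it needs.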

Designing with a clear edge computing architecture also supports secure, scalable operations. The distributed nature of these layers allows for offline resilience and modular expansion across multiple sites. When cloud resources are used, the split between edge and cloud becomes a deliberate design choice: real-time tasks are handled at the edge, while heavy analytics and long-term storage run in the central cloud as needed.
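One common way to get offline resilience is a store-and-forward buffer: the edge node queues outgoing messages locally and drains the queue when the uplink returns. The sketch below is an illustration under stated assumptions, not a reference implementation. The class name `StoreAndForwardBuffer`, the `send` callback (assumed to return `True` on successful delivery), and the drop-oldest policy are all hypothetical choices.

```python
from collections import deque
from typing import Callable, Deque


class StoreAndForwardBuffer:
    """Queue messages locally and forward them in order when the uplink works."""

    def __init__(self, send: Callable[[dict], bool], max_items: int = 1000):
        # `send` is an assumed callback: returns True if the message was delivered.
        self._send = send
        # A bounded deque discards the oldest entry when a new one is appended
        # to a full buffer, which caps storage use on constrained devices.
        self._pending: Deque[dict] = deque(maxlen=max_items)

    def submit(self, message: dict) -> None:
        """Queue a message, then try to deliver everything that is pending."""
        self._pending.append(message)
        self.flush()

    def flush(self) -> int:
        """Send pending messages oldest-first; stop at the first failure."""
        sent = 0
        while self._pending:
            if not self._send(self._pending[0]):
                break  # uplink is down; keep the message for a later attempt
            self._pending.popleft()
            sent += 1
        return sent

    def __len__(self) -> int:
        return len(self._pending)
```

Dropping the oldest message when the buffer is full suits telemetry where recent values matter most. A deployment that cannot lose data would more likely persist the queue to disk and apply back-pressure instead.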

Practical Adoption of Edge AI and Edge Data Processing for Scalable Outcomes

Edge AI and edge data processing bring intelligence directly to the source, on devices, gateways, or micro data centers, enabling autonomous decisions in environments like manufacturing, retail, and transportation. Running ML models at the edge reduces bandwidth usage, lowers latency, and enhances privacy by keeping sensitive data localized. These are the core benefits of edge computing in general.

A practical adoption path starts with a clear business outcome, maps data flows, and pilots a single use case before scaling. Key considerations include data locality, security at the edge, and interoperability across heterogeneous devices. As organizations mature, edge computing complements the cloud: each workload is placed where it best serves performance, cost, and resilience, while orchestration stays centralized for governance and long-term analytics.
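The placement decision between edge and cloud can be written down as a small rule set. The following is a deliberately simplified sketch, assuming only two inputs drive the choice: a latency budget and a data-locality requirement. The `Workload` fields, the `place` function, and the example numbers are hypothetical; real deployments typically also weigh cost, bandwidth, and hardware capacity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    """Illustrative description of a workload to be placed (hypothetical fields)."""
    name: str
    max_latency_ms: float          # response-time budget the workload must meet
    data_must_stay_local: bool     # governance or privacy constraint


def place(workload: Workload, cloud_round_trip_ms: float) -> str:
    """Return "edge" or "cloud" for a workload using two simple rules."""
    # Rule 1: governance wins. Data that may not leave the site stays at the edge.
    if workload.data_must_stay_local:
        return "edge"
    # Rule 2: if the cloud round trip alone exceeds the budget, run at the edge.
    if workload.max_latency_ms < cloud_round_trip_ms:
        return "edge"
    # Otherwise default to the cloud for its scalability and central storage.
    return "cloud"


if __name__ == "__main__":
    workloads = [
        Workload("defect-detection", max_latency_ms=20, data_must_stay_local=False),
        Workload("patient-monitoring", max_latency_ms=500, data_must_stay_local=True),
        Workload("monthly-reporting", max_latency_ms=60_000, data_must_stay_local=False),
    ]
    for w in workloads:
        print(w.name, "->", place(w, cloud_round_trip_ms=80))
```

Keeping the rules explicit like this makes it easier to review placement during a pilot and to revisit it as latency measurements or governance requirements change.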

Frequently Asked Questions

What is edge computing architecture, and what are the core edge computing benefits for real-time applications?

Edge computing architecture places compute resources closer to data sources, distributing roles across edge devices, gateways, and micro data centers, with an optional central cloud. In this architecture, edge data processing and local analytics enable real-time insights without sending raw data to the cloud. The benefits include lower latency, reduced bandwidth usage, offline resilience, and improved privacy. Many deployments pair the edge architecture with cloud services to handle long-term storage and centralized analytics when appropriate.

How do edge and cloud compare when using edge AI and edge data processing to achieve low latency and data privacy?

In a hybrid edge-cloud setup, edge AI runs machine learning models directly on edge devices or gateways, enabling fast, local decisions. Pairing this with edge data processing, which filters and summarizes data at the source, reduces cloud traffic and enhances privacy. The cloud remains the place for heavy analytics and long-term storage. This hybrid approach supports low latency, scalable deployment, and resilience. A practical path is to pilot at a single site, then expand with an orchestration layer that coordinates workloads across edge and cloud.

| Key Point | Description |
|---|---|
| What is Edge Computing and Why It Matters | Edge computing moves processing closer to data sources, reducing latency and bandwidth use and enabling real-time insights; it contrasts with traditional cloud-centric models. |
| Core Components of Edge Computing Architecture | Edge devices, edge gateways, micro data centers, central cloud (optional), and middleware/orchestration. |
| Edge AI and Edge Data Processing | Edge AI runs ML models on devices/gateways for local decisions; edge data processing filters and aggregates data at the source to save bandwidth and improve privacy. |
| Benefits | Lower latency, bandwidth savings, reliability, privacy, and scalable, modular deployment. |
| Edge vs Cloud: Complementary Relationship | Hybrid architectures leverage both edge and cloud, with multi-access edge computing (MEC) enabling telecom-grade edge resources alongside cloud services. |
| Practical Use Cases Across Industries | Industrial automation, autonomous systems, retail/smart cities, healthcare devices, energy/utilities. |
| Architectural Patterns and Implementation Considerations | Data locality, security at the edge, heterogeneous environments, operational management, and cost considerations. |
| Roadmap for Starting an Edge Computing Initiative | Define outcomes, map data flows, choose architecture, pilot, build orchestration, and expand. |
| Challenges and Tradeoffs to Anticipate | Security at multiple edge points, device heterogeneity, operational complexity, and cost considerations. |

Summary

Edge computing reshapes how organizations design and deploy modern technology infrastructure, enabling closer-to-source processing and faster decision-making. This overview covered edge computing architecture, the practical benefits of edge AI and edge data processing, and how a hybrid edge-cloud approach supports real-time intelligence across industries. By balancing latency, bandwidth, privacy, and scalability, edge computing powers smarter devices and resilient operations as organizations pursue digital transformation.