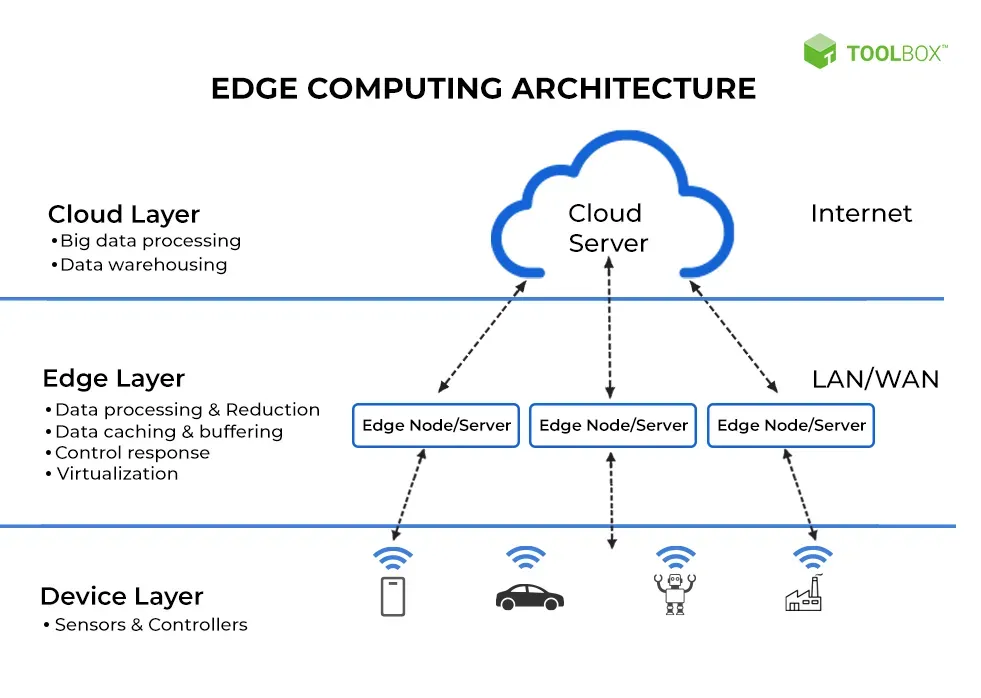

Cloud to Edge infrastructure marks a shift in how organizations deploy and manage applications, combining cloud-scale flexibility with edge responsiveness. It reframes traditional architectural questions as businesses seek to balance centralized control with distributed capability. Processing data at the edge brings analytics and AI inference closer to data sources, which shortens the time to a decision. The benefits are concrete: lower latency, reduced bandwidth needs, and improved privacy because sensitive data is processed locally. A pragmatic path forward blends on-site and cloud resources to optimize real-time workloads across distributed environments.

In practical terms, organizations are adopting a distributed compute model that moves processing toward the data sources, at near-edge locations. This approach draws on edge-native architectures, fog computing, and on-device processing to reduce round trips and improve responsiveness. By combining local inference, micro data centers, and selective cloud analytics, teams can balance scale with latency-sensitive requirements. Data locality, security, and governance are core themes alongside optimization, resilience, and interoperability. In short, the shift from centralized data centers to a near-edge, distributed ecosystem pairs fast local insights with scalable cloud services.

1) Cloud to Edge Infrastructure: Realizing Hybrid Cloud Edge Architecture for Latency-Sensitive Workloads

Cloud to Edge infrastructure blends cloud scale with edge locality to distribute compute and storage across centralized data centers and local edge sites. This hybrid approach supports latency-sensitive workloads and data sovereignty needs, aligning with the ongoing evolution of technology infrastructure. By pairing cloud computing with edge resources, organizations can place workloads where they perform best—near the data source—while maintaining cloud-based governance and orchestration for broader analytics.

Key architectural patterns emerge from this model, including a hybrid cloud edge architecture, edge-native microservices, and data pipelines with tiered storage. Local pre-processing reduces bandwidth requirements and speeds up decision-making, while aggregated data can still be moved to the cloud for long-term analytics and model refinement. Observability and governance at scale help maintain reliability and compliance across distributed resources, which makes the Cloud to Edge approach structured and repeatable to implement.
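To make the bandwidth argument concrete, here is a minimal sketch of local pre-processing: an edge node reduces each batch of raw sensor readings to a single summary record before anything is sent to the cloud. The `Summary` schema, the `summarize` and `batches` helpers, and the sensor name are hypothetical and chosen only for illustration; a real pipeline would pick its own fields and batch size.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Summary:
    """Compact record sent upstream in place of the raw readings (hypothetical schema)."""
    sensor_id: str
    count: int
    minimum: float
    maximum: float
    average: float


def summarize(sensor_id: str, readings: list[float]) -> Summary:
    """Reduce one batch of raw readings to a single summary record."""
    if not readings:
        raise ValueError("readings must not be empty")
    return Summary(sensor_id, len(readings), min(readings), max(readings), mean(readings))


def batches(values: list[float], size: int):
    """Yield consecutive fixed-size batches; the last one may be shorter."""
    for start in range(0, len(values), size):
        yield values[start:start + size]


# Six raw readings collapse into two summary records.
raw = [20.0, 20.5, 21.0, 21.5, 22.0, 22.5]
summaries = [summarize("temp-01", batch) for batch in batches(raw, 3)]
```

With a batch size of 3, the cloud receives one record for every three readings. The trade-off is that detail inside a batch is lost unless the raw data is also kept in a local storage tier.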

2) Data Processing at the Edge: Enabling Real-Time Analytics and AI Inference at the Edge

Data processing at the edge empowers streaming analytics, AI inference, and event-driven processing right where data is generated. This capability enables real-time decision-making in manufacturing, autonomous systems, and customer experiences, demonstrating the edge’s clear advantages in latency reduction, bandwidth savings, and privacy preservation.
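As an illustration of streaming analytics running where the data is generated, the sketch below flags anomalous readings with a rolling window of recent values. It is one simple technique among many, not a recommended production detector; the `RollingDetector` class, the window size, and the threshold are assumptions made for this example.

```python
from collections import deque
from statistics import mean, pstdev


class RollingDetector:
    """Flag values that deviate strongly from a rolling window of recent values."""

    def __init__(self, window: int = 5, threshold: float = 3.0):
        self.values = deque(maxlen=window)  # oldest value is dropped automatically
        self.threshold = threshold          # allowed deviation, in standard deviations

    def observe(self, value: float) -> bool:
        """Return True if the value looks anomalous; always record it afterwards."""
        is_anomaly = False
        if len(self.values) == self.values.maxlen:  # only judge once the window is full
            mu = mean(self.values)
            sigma = pstdev(self.values)
            if sigma > 0 and abs(value - mu) > self.threshold * sigma:
                is_anomaly = True
        self.values.append(value)
        return is_anomaly


detector = RollingDetector(window=5, threshold=3.0)
stream = [10.0, 10.2, 9.8, 10.1, 9.9, 10.0, 25.0]
flags = [detector.observe(v) for v in stream]
```

Because the decision needs only a handful of recent values, it can run on a constrained device and react without a round trip to the cloud. Only the flagged events need to be forwarded.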

Realizing these benefits requires robust security, governance, and interoperability across distributed environments. Organizations should invest in hardware-backed security, encryption, zero-trust principles, and supply-chain controls while adopting a hybrid cloud edge mindset that balances cloud-scale analytics with edge immediacy. Across industries, from manufacturing to healthcare and smart cities, the focus remains on optimizing performance and cost while weighing the trade-offs between cloud computing and edge computing.

Frequently Asked Questions

How does Cloud to Edge infrastructure relate to cloud computing vs edge computing, and why is a hybrid cloud edge architecture often the best approach?

Cloud to Edge infrastructure blends cloud scalability with the low-latency benefits of edge computing, enabling workloads to run where they make the most sense. It treats cloud computing and edge computing as complementary rather than competing: a hybrid cloud edge architecture places processing based on latency, data locality, and governance. Data processing at the edge, such as real-time analytics and AI inference, reduces round trips and bandwidth needs, delivering faster decisions while preserving cloud-scale capabilities.
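One way to picture placement based on latency, data locality, and governance is a small rule-based policy. The sketch below is only an illustration under stated assumptions: the `Workload` fields, the `place` function, and the default round-trip time and uplink capacity are hypothetical values, not measurements or a standard API.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    EDGE = "edge"
    CLOUD = "cloud"


@dataclass(frozen=True)
class Workload:
    name: str
    max_latency_ms: float        # latency budget the workload must meet
    data_must_stay_local: bool   # governance or sovereignty constraint
    input_mb_per_s: float        # raw data rate produced at the source


def place(w: Workload, cloud_rtt_ms: float = 80.0, uplink_mb_per_s: float = 10.0) -> Tier:
    """Pick a tier; the default RTT and uplink figures are illustrative assumptions."""
    if w.data_must_stay_local:               # governance: data may not leave the site
        return Tier.EDGE
    if w.max_latency_ms < cloud_rtt_ms:      # latency: the cloud round trip is too slow
        return Tier.EDGE
    if w.input_mb_per_s > uplink_mb_per_s:   # locality: the uplink cannot carry raw data
        return Tier.EDGE
    return Tier.CLOUD                        # otherwise use cloud scale


inspection = place(Workload("defect-detection", 20.0, False, 5.0))
records = place(Workload("patient-records", 500.0, True, 0.1))
report = place(Workload("monthly-report", 60000.0, False, 0.5))
```

Here the first workload lands at the edge because of its latency budget, the second because of its governance constraint, and the third goes to the cloud. A real orchestrator would also weigh cost, capacity, and resilience.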

What are the key use cases and security considerations for Cloud to Edge infrastructure, and what advantages does data processing at the edge provide?

Key use cases span manufacturing and industrial IoT, healthcare, smart cities, and retail, where data processing at the edge enables real-time responses, predictive maintenance, and local decisioning. Edge computing advantages include low latency, improved privacy, offline resilience, and reduced bandwidth. Security and governance are essential: hardware-based security, secure boot, encryption at rest and in transit, zero-trust access, immutable infrastructure, and robust software supply chain controls, all monitored through centralized observability.

| Key Point | Description |
|---|---|
| Evolution of Technology Infrastructure | From on-prem data centers to cloud services and now edge computing; distributed, low-latency ecosystems that support autonomous operation. |
| Cloud to Edge infrastructure concept | Fuses cloud scalability with edge immediacy; shifts focus to latency, data strategy, and governance. |
| Hybrid cloud edge architectures | Distributes workloads across cloud and edge with a unified management plane; initial processing at the edge, cloud for deeper analytics. |
| Architectural patterns | Hybrid cloud edge; Edge-native microservices; Data pipelines with tiered storage; Observability and governance at scale. |
| Data processing at the edge | Edge devices and edge servers perform streaming analytics and AI inference locally for real-time decisions; reduces latency. |
| Use cases across industries | Manufacturing/Industrial IoT, Healthcare, Smart cities/transportation, Retail/hospitality, Telecommunications. |
| Security and compliance considerations | Hardware security, encryption, zero-trust, immutable infrastructure, and robust policy enforcement across cloud and edge. |
| Operational best practices | Standardization, IaC, centralized observability, continuous optimization, and governance and skills development. |
| Future trends and outlook | 5G, AI at the edge, software-defined infrastructure, dynamic workload placement, and seamless orchestration. |

Summary

Cloud to Edge infrastructure enables organizations to balance latency, privacy, and scale across distributed environments by blending edge immediacy with cloud capabilities. By adopting hybrid architectures, data processing at the edge, and robust security, teams can achieve real-time insights, resilience, and governance. The patterns outlined—hybrid cloud edge, edge-native microservices, tiered data pipelines, and end-to-end observability—provide a practical blueprint for modern workloads. Industries such as manufacturing, healthcare, smart cities, retail, and telecommunications can benefit from localized analytics, but must navigate security and governance challenges. As technology evolves with 5G, AI at the edge, and scalable orchestration, Cloud to Edge infrastructure will continue to unlock faster decision-making and more agile business outcomes.