Natural Language Processing, or NLP, sits at the crossroads of linguistics, computer science, and cognitive science as it helps machines understand human language. At its core, NLP blends rules and learning to move beyond simple keyword matching toward intuitive understanding of text, speech, and intent. The topic touches on practical tasks like tokenization, part-of-speech tagging, and sentiment analysis, and it expands into applications across many industries. By tracing advances from rule-based systems to neural networks, the field reveals both notable achievements and ongoing work toward more human-like interpretation. This overview highlights core ideas, practical use cases, and the evolving role of NLP technology in everyday tools.

Viewed from another angle, the topic overlaps with computational linguistics and language-aware AI, where software derives meaning from context rather than from rigid rules alone. This framing emphasizes semantic relationships, co-occurrence patterns, and contextual representations. Approaches in the spirit of latent semantic indexing (LSI) use latent associations and semantic neighborhoods to link related ideas across documents and conversations. Together, these ideas guide practitioners in designing systems that understand user intent, respond coherently, and adapt across languages and domains.

Natural Language Processing: From Theory to Real-World NLP Technology

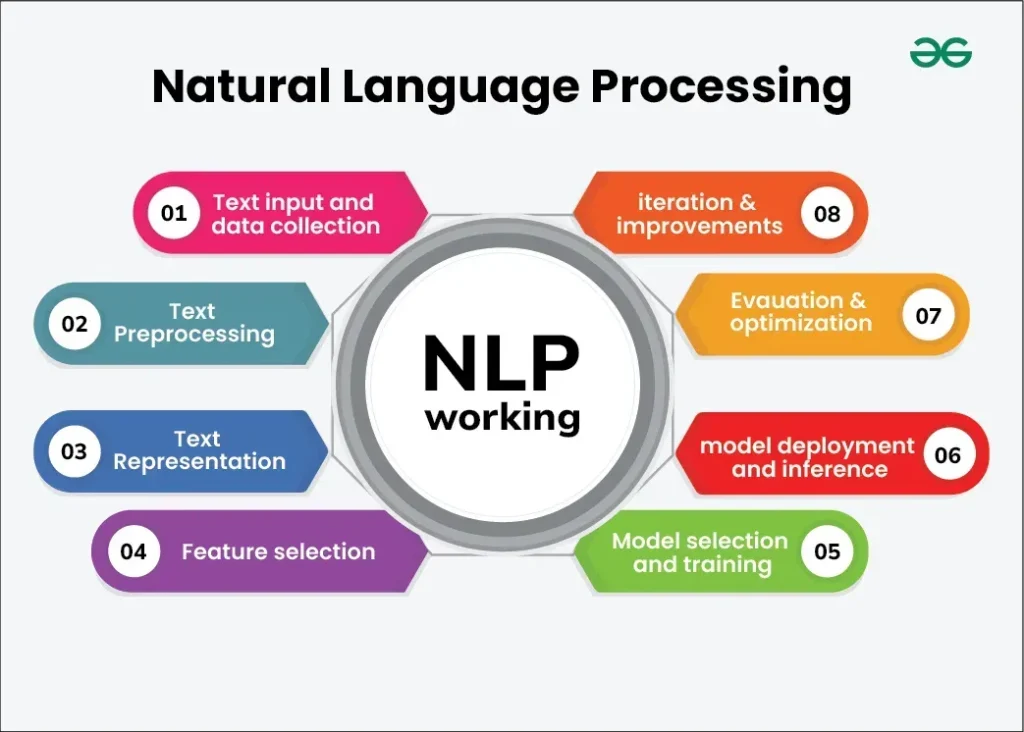

In practice, NLP aims to translate messy human language into signals machines can act on. This usually means a pipeline of tasks: tokenization to break text into words and punctuation; part-of-speech tagging to identify word roles; and dependency parsing to reveal how words relate. Named entity recognition spots people, places, and organizations; sentiment analysis captures tone; and machine translation bridges languages. While each component matters, the strongest capabilities emerge when the components are learned as a coherent system that generalizes from data. This is where NLP technology moves beyond static rules toward adaptive pipelines that improve with more data, bringing machine understanding closer to real-world NLP applications such as search, customer support, and content analysis.
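
To make the first pipeline stage concrete, here is a minimal tokenization sketch. It assumes a simple regular expression is good enough for illustration; the pattern and the `tokenize` helper are hypothetical, and production tokenizers handle many more cases (URLs, hyphenation, non-Latin scripts).

```python
import re

# Hypothetical pattern for illustration: a word (optionally with one internal
# apostrophe, as in "isn't") or any single non-space punctuation character.
TOKEN_PATTERN = re.compile(r"\w+(?:'\w+)?|[^\w\s]")


def tokenize(text: str) -> list[str]:
    """Split text into word and punctuation tokens, in order of appearance."""
    return TOKEN_PATTERN.findall(text)


tokens = tokenize("NLP isn't magic, it's statistics!")
print(tokens)
# ['NLP', "isn't", 'magic', ',', "it's", 'statistics', '!']
```

Later stages such as part-of-speech tagging and dependency parsing would consume this token list rather than the raw string.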

At the heart of today’s NLP is learning from large corpora. Word embeddings and contextual representations help machines capture semantic relationships that surface text alone does not show. Transformer models such as BERT and GPT capture long-range context and can be fine-tuned for specific tasks such as information retrieval, question answering, or text classification. This is the core of natural language processing applications: models that infer intent, resolve pronouns, and generate coherent responses for customer service, content creation, and assistive technologies. The combination of model architecture and data is what makes NLP technology valuable in practice, moving systems from word matching toward more nuanced understanding.
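
One way to see how embeddings encode semantic relationships is to compare vectors with cosine similarity. The sketch below is illustrative only: the three-dimensional vectors in `EMBEDDINGS` are invented for this example, whereas real embeddings are learned from data and have far more dimensions.

```python
import math

# Toy vectors made up for illustration; they are not taken from any real model.
EMBEDDINGS = {
    "king": [0.9, 0.8, 0.1],
    "queen": [0.9, 0.7, 0.2],
    "apple": [0.1, 0.2, 0.9],
}


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


related = cosine_similarity(EMBEDDINGS["king"], EMBEDDINGS["queen"])
unrelated = cosine_similarity(EMBEDDINGS["king"], EMBEDDINGS["apple"])
print(f"king~queen: {related:.3f}, king~apple: {unrelated:.3f}")
```

With these toy values, the related pair scores much closer to 1 than the unrelated pair, which is the property that downstream tasks rely on.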

NLP Technology in Practice: Applications, Challenges, and Future Trends

The scope of NLP spans many industries and use cases. In search and information retrieval, NLP helps engines understand user queries and deliver relevant results even when phrasing differs from indexed content. In the realm of communication, chatbots and virtual assistants rely on robust language understanding to carry on natural conversations with users. In content analysis, text analytics can reveal topics, trends, sentiment, and other insights across large datasets. Speech recognition, a closely related area, converts spoken language into text with high accuracy, enabling automated transcription, voice interfaces in cars and phones, and accessibility tools for people with disabilities. When combined with NLP, speech recognition pipelines can deliver end-to-end experiences that feel intuitive and responsive. The practical value of natural language processing applications is evident in everything from enterprise workflows to consumer apps, and it continues to expand as models become more capable.
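
As a point of reference for the search use case, the following sketch ranks documents against a query with a simple TF-IDF-style score. It is a purely lexical baseline under stated assumptions: the `rank` function, the whitespace tokenizer, and the sample documents are all hypothetical, and matching queries whose wording differs from the indexed text generally requires the learned representations discussed earlier.

```python
import math
from collections import Counter


def tokenize(text):
    """Lowercase and split on whitespace (deliberately naive)."""
    return text.lower().split()


def rank(query, documents):
    """Return (document index, score) pairs, highest score first."""
    doc_tokens = [tokenize(doc) for doc in documents]
    n_docs = len(documents)
    # Document frequency: in how many documents each term appears.
    doc_freq = Counter(term for tokens in doc_tokens for term in set(tokens))
    scored = []
    for index, tokens in enumerate(doc_tokens):
        term_freq = Counter(tokens)
        score = sum(
            term_freq[term] * math.log(1 + n_docs / doc_freq[term])
            for term in tokenize(query)
            if term in term_freq
        )
        scored.append((index, score))
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


documents = [
    "reset your password from the login page",
    "track your order and delivery status",
    "update billing and payment details",
]
results = rank("how do i reset my password", documents)
print(results[0])  # the password document ranks first
```

A query such as "forgot my credentials" would score zero against every document here, which illustrates why lexical overlap alone is not enough for robust search.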

Yet NLP technology is not without its challenges. Language is inherently ambiguous and context dependent. Words can have multiple meanings, and the same sentence may convey different intents in different situations. Pragmatics, sarcasm, slang, and domain-specific jargon can stump even advanced models. Bias in training data can lead to unfair or erroneous outputs, and privacy concerns demand careful governance when handling language data that may reveal sensitive information. Building reliable NLP systems requires careful data curation, transparent evaluation, and ongoing monitoring. It also calls for a thoughtful balance between accuracy and efficiency, since large models demand significant compute resources and energy. For many organizations, on-device NLP or model distillation techniques offer pathways to faster, privacy-preserving solutions without sacrificing too much performance, aligning with responsible deployment of NLP technology.
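
The model distillation idea mentioned above can be sketched as a loss that pushes a small student model toward a large teacher's output distribution. This is one common formulation (KL divergence between temperature-softened distributions), not a complete training recipe; the function names, logits, and temperature value are assumptions chosen for illustration.

```python
import math


def softmax(logits, temperature=1.0):
    """Convert logits to probabilities; higher temperature flattens them."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from the teacher's softened distribution to the student's."""
    teacher = softmax(teacher_logits, temperature)
    student = softmax(student_logits, temperature)
    return sum(p * math.log(p / q) for p, q in zip(teacher, student))


# Hypothetical logits over three classes.
teacher_logits = [4.0, 1.0, 0.5]
close_student = [3.5, 1.2, 0.4]
far_student = [0.5, 1.0, 4.0]

print(distillation_loss(teacher_logits, close_student))
print(distillation_loss(teacher_logits, far_student))
```

The loss is near zero when the student already mimics the teacher and grows as their predictions diverge; in training, this term is typically combined with the ordinary loss on labeled data.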

Frequently Asked Questions

What is NLP technology and how does it enable machine understanding in real-world tasks?

NLP technology comprises methods that turn messy natural language into structured signals computers can act on. It supports machine understanding by combining tokenization, POS tagging, dependency parsing, NER, sentiment analysis, and translation, often via transformer models (e.g., BERT, GPT). These capabilities power natural language processing applications such as search, chatbots, text analytics, and, in many pipelines, speech recognition.

What are common natural language processing applications that benefit from advances in text analytics and speech recognition?

Common natural language processing applications include information retrieval, chatbots, sentiment analysis, and machine translation. Text analytics surfaces topics, trends, and sentiment from large datasets, while speech recognition converts spoken language into text for transcription and voice interfaces. Advances in transformer models support these NLP applications with better accuracy and multilingual capabilities.

| Topic | Key Points |
|---|---|
| What NLP is | An interdisciplinary field (linguistics, computer science, cognitive science) that bridges human language and machine processing; aims to move beyond word matching toward genuine understanding. |
| Core tasks | Tokenization, POS tagging, dependency parsing, named entity recognition, sentiment analysis, and machine translation. |
| Learning approach | Learning from large corpora with word embeddings and contextual representations; transformers enable long-range context; pre-trained models (e.g., BERT, GPT) adapt to many tasks. |
| Applications | Information retrieval, chatbots, content analytics, speech recognition; NLP enables end-to-end experiences when integrated with other technologies. |
| Scope | Spans industries and use cases like search, communication, content analysis, speech-to-text, accessibility, and enterprise/customer applications. |
| Challenges | Language ambiguity and context dependence; pragmatics, sarcasm, slang, bias in data, privacy concerns, and computational/resource demands. |
| Evaluation | Quantitative metrics (precision, recall, F1); generation/translation metrics (BLEU, ROUGE); plus human evaluation, A/B testing, and human-in-the-loop approaches. |
| Future directions | Multilingual NLP, low-resource languages, on-device AI, multimodal NLP (text with images/audio/video), and improved interpretability and controllability. |
| Practitioner guidance | Define clear business objectives, assemble quality labeled data, enforce governance to protect privacy and reduce bias, monitor data drift, and combine automation with human oversight. |
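
The precision, recall, and F1 metrics listed in the Evaluation row can be computed directly from gold and predicted labels. The sketch below assumes a binary sentiment task; the label names and the sample data are invented for illustration.

```python
def precision_recall_f1(gold, predicted, positive="positive"):
    """Compute precision, recall, and F1 for one class treated as positive."""
    pairs = list(zip(gold, predicted))
    true_pos = sum(1 for g, p in pairs if g == positive and p == positive)
    false_pos = sum(1 for g, p in pairs if g != positive and p == positive)
    false_neg = sum(1 for g, p in pairs if g == positive and p != positive)

    precision = true_pos / (true_pos + false_pos) if true_pos + false_pos else 0.0
    recall = true_pos / (true_pos + false_neg) if true_pos + false_neg else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical labels for five reviews.
gold = ["positive", "negative", "positive", "positive", "negative"]
predicted = ["positive", "positive", "negative", "positive", "negative"]

precision, recall, f1 = precision_recall_f1(gold, predicted)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
# precision=0.67 recall=0.67 f1=0.67
```

Here two of the three predicted positives are correct and two of the three actual positives are found, so all three metrics come out to two thirds.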